Subsidiary Project: 20175/30.10.2019, part of NETIO P_40_270 53/05.09.2016

Intelligent Agent for Recipe Recommendation Based on Visual Identification of Available Ingredients

Deployment Period: 30.10.2019 – 22.04.2021

Project Objectives

- Develop a software agent capable of recommending recipes to users based on what ingredients they have available in their fridge.

- Research, propose and validate methods for image-based recognition of ingredients placed on shelves of a fridge.

- Create semantic models of the knowledge in the project (ingredients, recipes, food categories, suppliers, etc.) for information querying and extraction.

- Build (deep learning based) recommender systems for recipes, analysing user interaction and feedback.

- Design and build a prototype smart-fridge which integrates the sub-systems proposed in the project.

Activities

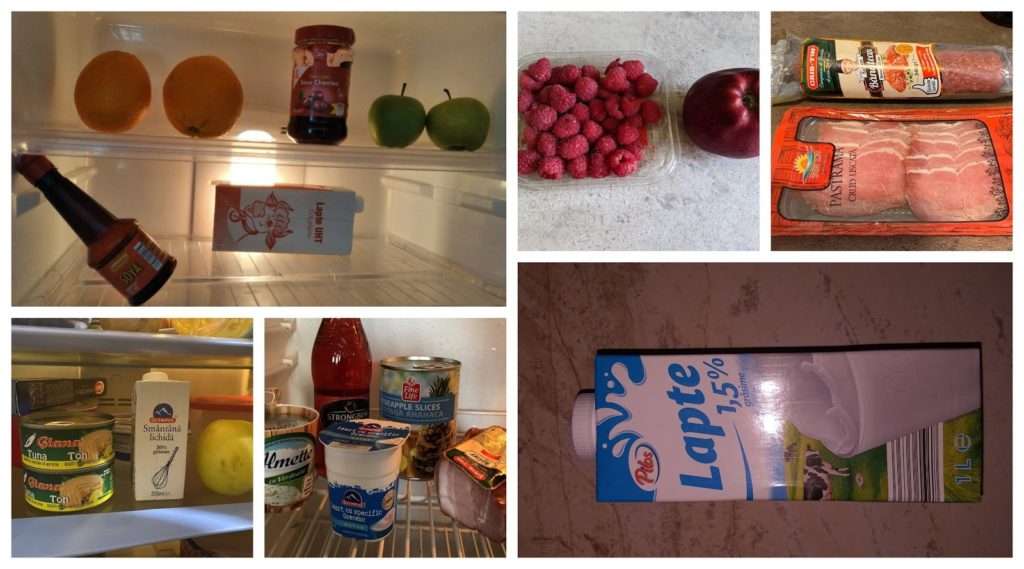

- Input: RGB cameras capture images from the shelves of a fridge.

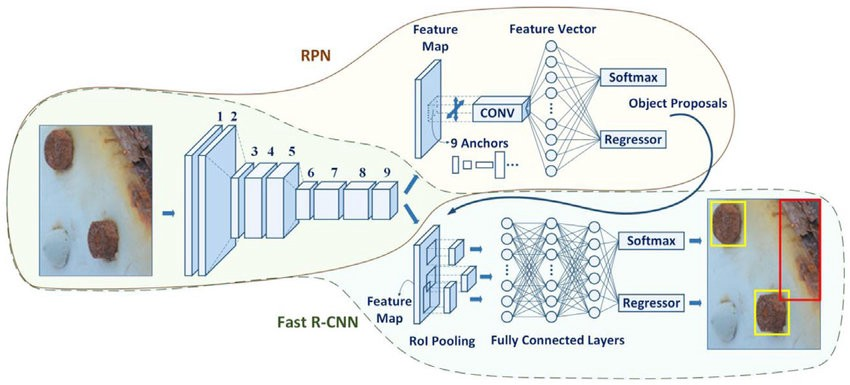

- Processing: Use deep learning architectures to identify the ingredients and estimate the quantity.

- Main challenge: No suitable ingredient datasets are available. Most public datasets contain cooked food, and this data scarcity does not allow robust training of such models.

- Solution: Build a problem-specific dataset to use for fine-tuning after performing transfer learning.

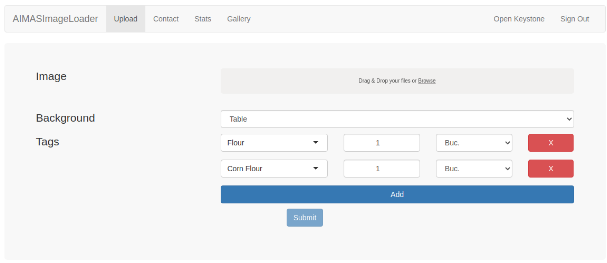

- We collected a dataset containing 4019 images.

- Each image is tagged with the ingredients it contains, their quantities (value and unit of measurement), as well as how the ingredients were displayed (on the table or in the fridge).

- 41 people, members of the project team and volunteers, contributed to the creation of this dataset.

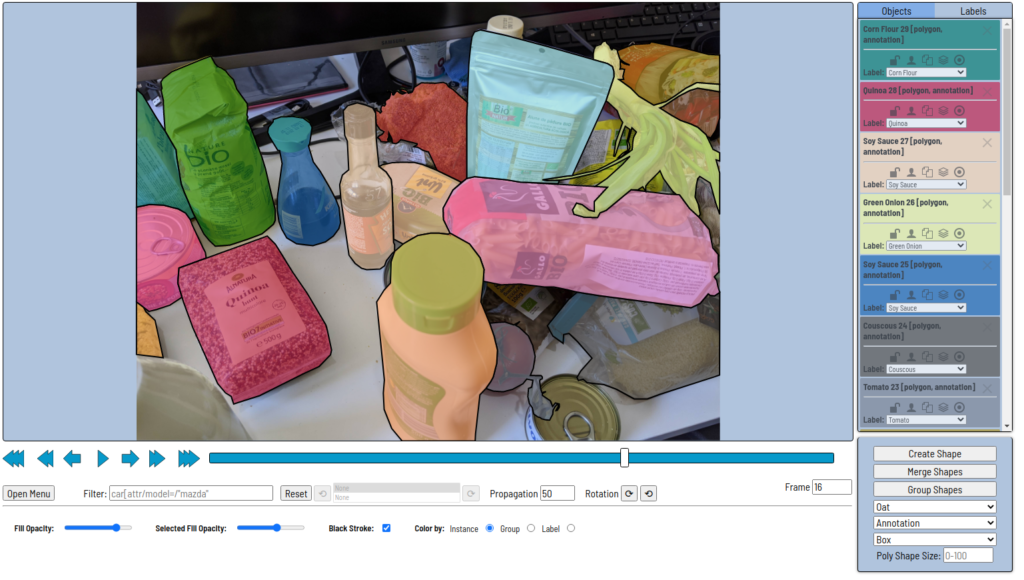

- We annotated the collected dataset using CVAT (Computer Vision Annotation Tool).

- We performed 80 annotation tasks. Each task contains 5 jobs, and each job contains 10 images.

- Annotation was performed in two phases, annotation and validation, by 2 separate contributors in order to reduce error rates and increase the dataset quality.

- The segmentation process involved 24 people (21 volunteers and 3 members of the project team), and it also involved two stages.

- We exported the dataset from CVAT and converted it to the COCO format.

- We decided to reduce the number of classes to 167.

- The resulting dataset was divided into 80% for training and 20% for testing.
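The 80/20 division can be illustrated with a minimal sketch that splits a COCO-style dictionary by image, so that every annotation stays with its image. The `images`, `annotations` and `categories` keys follow the COCO layout; the function name `split_coco`, the fixed seed and the toy data are assumptions made for illustration, not the project's actual code.

```python
import random


def split_coco(coco, train_fraction=0.8, seed=0):
    """Split a COCO-style dict into train/test subsets, image by image."""
    image_ids = sorted(img["id"] for img in coco["images"])
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    rng.shuffle(image_ids)
    n_train = int(round(train_fraction * len(image_ids)))
    train_ids = set(image_ids[:n_train])

    def subset(keep_train):
        return {
            "images": [i for i in coco["images"]
                       if (i["id"] in train_ids) == keep_train],
            "annotations": [a for a in coco["annotations"]
                            if (a["image_id"] in train_ids) == keep_train],
            "categories": coco["categories"],  # class list is shared
        }

    return subset(True), subset(False)


# Toy data (hypothetical): 10 images with one annotation each.
toy = {
    "images": [{"id": i, "file_name": f"img_{i}.jpg"} for i in range(10)],
    "annotations": [{"id": i, "image_id": i, "category_id": 1}
                    for i in range(10)],
    "categories": [{"id": 1, "name": "tomato"}],
}
train, test = split_coco(toy)
print(len(train["images"]), len(test["images"]))
```

Splitting by image rather than by annotation avoids having masks from the same photo on both sides of the split.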

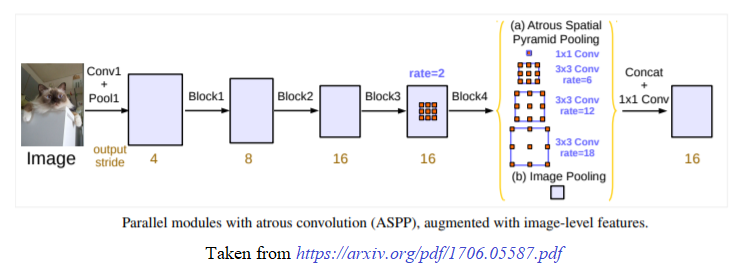

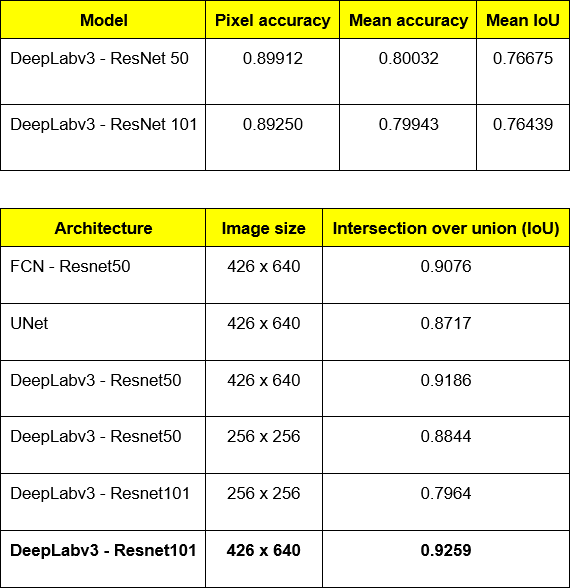

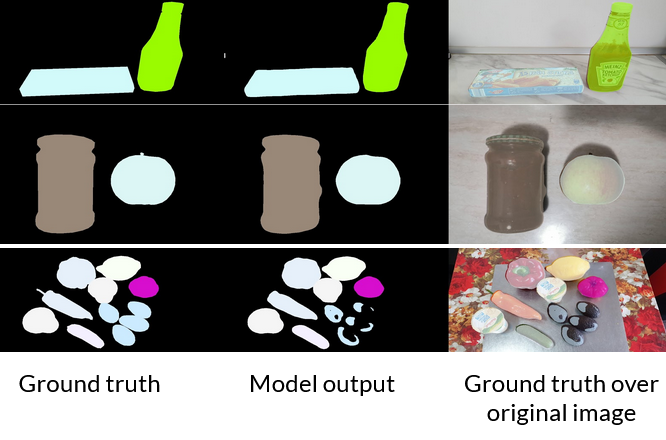

- We used the resulting dataset to train two types of models specialized in semantic segmentation: DeepLabv3 and UNet.

- To improve the performance of segmentation networks, we decided to use augmentation techniques.

- We used Albumentations to perform augmentation simultaneously for image and segmentation mask.

- We performed the augmentation based on the composition of the following types of transformations: CLAHE, ShiftScaleRotate, VerticalFlip, HorizontalFlip and RandomCrop.
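The key requirement for segmentation is that every geometric transformation is applied identically to the image and to its mask. In Albumentations this is done by passing both to the same composed transform (`transform(image=..., mask=...)`). The sketch below shows the same idea without any library, using nested lists and only flips and a random crop; all function names are hypothetical, and it is not the project's pipeline.

```python
import random


def hflip(grid):
    """Mirror each row (horizontal flip)."""
    return [row[::-1] for row in grid]


def vflip(grid):
    """Reverse the row order (vertical flip)."""
    return grid[::-1]


def crop(grid, top, left, crop_h, crop_w):
    """Cut a crop_h x crop_w window starting at (top, left)."""
    return [row[left:left + crop_w] for row in grid[top:top + crop_h]]


def augment_pair(image, mask, crop_h, crop_w, rng, p=0.5):
    """Apply the same random flips and crop to an image and its mask."""
    if rng.random() < p:
        image, mask = hflip(image), hflip(mask)
    if rng.random() < p:
        image, mask = vflip(image), vflip(mask)
    # Draw the crop position once and reuse it for both arrays.
    top = rng.randint(0, len(image) - crop_h)
    left = rng.randint(0, len(image[0]) - crop_w)
    return (crop(image, top, left, crop_h, crop_w),
            crop(mask, top, left, crop_h, crop_w))


# Toy example: the mask marks every pixel whose value is at least 20.
rng = random.Random(42)
image = [[10 * r + c for c in range(4)] for r in range(4)]
mask = [[1 if value >= 20 else 0 for value in row] for row in image]
aug_image, aug_mask = augment_pair(image, mask, crop_h=3, crop_w=3, rng=rng)

# The mask still lines up with the image after augmentation.
print(aug_mask == [[1 if v >= 20 else 0 for v in row] for row in aug_image])
```

Photometric transformations such as CLAHE change only pixel values, so they apply to the image alone and leave the mask untouched.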

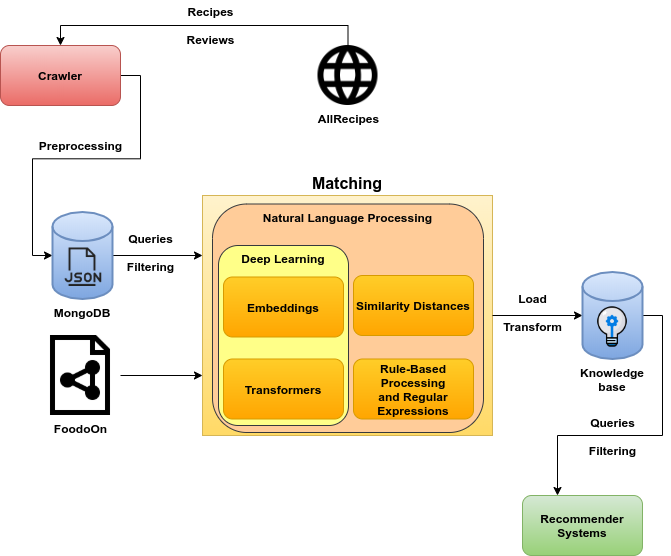

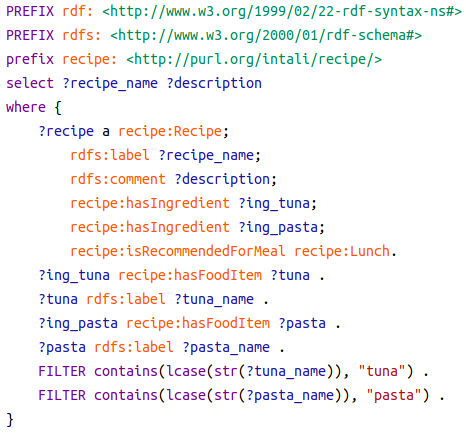

Recipe Extraction, Modeling and Querying Pipeline

- We created a crawler which uses the AllRecipes API to crawl recipes and store the data in a MongoDB database.

- We also scraped the Lidl retailer website in order to emulate the integration of a delivery service with our recommendation engine.

- Data scraped from AllRecipes and Lidl:

  - 66,200 recipes from AllRecipes

  - 4,138,807 reviews for all the recipes

  - 3,660 product descriptions from Lidl

- Technologies:

  - Python programming language

  - Selenium for scraping

  - MongoDB for storage

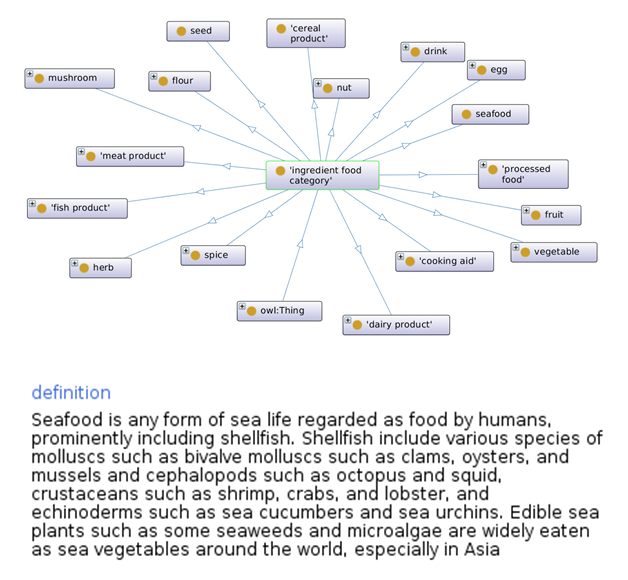

- We designed and implemented the IntAli Ontology (based on FoodOn), which facilitates semantic modeling: e.g. food category taxonomy, ingredient characteristics (quantity, nutritional information, etc.).

- We map unstructured recipe data to the semantic space automatically, using classic (regular expressions, rule-based) and deep-learning-based NLP techniques.

- A GraphDB knowledge base is used to store semantically modelled recipes and answer queries.

- Results for Fast R-CNN using the IntAli dataset.
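As an illustration of the classic, rule-based part of the mapping, the sketch below uses a regular expression to split a free-text ingredient line into quantity, unit and name. The unit list, the pattern and the function names are assumptions made for this example; the project's actual rules are not given in the source.

```python
import re
from fractions import Fraction

# Hypothetical, deliberately short list of units.
UNIT_PATTERN = r"cups?|tablespoons?|teaspoons?|grams?|kg|g|ml|l"

INGREDIENT_RE = re.compile(
    # Quantity: mixed number ("1 1/2"), fraction ("1/2") or decimal ("0.5").
    r"^\s*(?P<quantity>\d+\s+\d+/\d+|\d+/\d+|\d+(?:\.\d+)?)?\s*"
    # Unit: must end at a word boundary so "g" does not match "garlic".
    r"(?:(?P<unit>" + UNIT_PATTERN + r")\b)?\s*"
    r"(?P<name>.+?)\s*$",
    re.IGNORECASE,
)


def to_number(text):
    """Convert '1 1/2', '1/2' or '0.5' to a float."""
    return float(sum(Fraction(part) for part in text.split()))


def parse_ingredient(line):
    """Return quantity, unit and name for one ingredient line, or None."""
    match = INGREDIENT_RE.match(line)
    if match is None:
        return None
    quantity = match.group("quantity")
    unit = match.group("unit")
    return {
        "quantity": to_number(quantity) if quantity else None,
        "unit": unit.lower() if unit else None,
        "name": match.group("name"),
    }


for text in ["1 1/2 cups milk", "200 g butter", "2 large eggs"]:
    print(parse_ingredient(text))
```

Rules of this kind handle the regular cases cheaply; lines they cannot parse are the natural candidates for the learned NLP models.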

Recipe Data Structure

- Our proposed schema for a recipe:

  - Name

  - Description

  - Picture

  - Number of servings

  - Ingredient list, including the unit of measurement, quantity and description of each ingredient

  - Preparation instructions and cooking time

  - Labels
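The recipe schema listed above can be sketched as a pair of Python dataclasses. The field names, types and sample values are assumptions chosen to mirror the listed items; the stored MongoDB documents may be laid out differently.

```python
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class Ingredient:
    description: str
    quantity: Optional[float] = None  # numeric amount, if stated
    unit: Optional[str] = None        # unit of measurement, if stated


@dataclass
class Recipe:
    name: str
    description: str
    picture: str                      # e.g. an image URL or file path
    servings: int
    ingredients: List[Ingredient]
    instructions: List[str]           # one entry per preparation step
    cooking_time_minutes: Optional[int] = None
    labels: List[str] = field(default_factory=list)


# Hypothetical sample record.
recipe = Recipe(
    name="Tomato omelette",
    description="A quick omelette with fresh tomatoes.",
    picture="omelette.jpg",
    servings=2,
    ingredients=[
        Ingredient("butter", quantity=20, unit="g"),
        Ingredient("eggs", quantity=4),
        Ingredient("tomato", quantity=1),
    ],
    instructions=["Beat the eggs.", "Fry with butter and diced tomato."],
    cooking_time_minutes=10,
    labels=["breakfast", "vegetarian"],
)

# asdict() yields a nested dict, the shape a document store expects.
document = asdict(recipe)
print(document["ingredients"][0])
```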

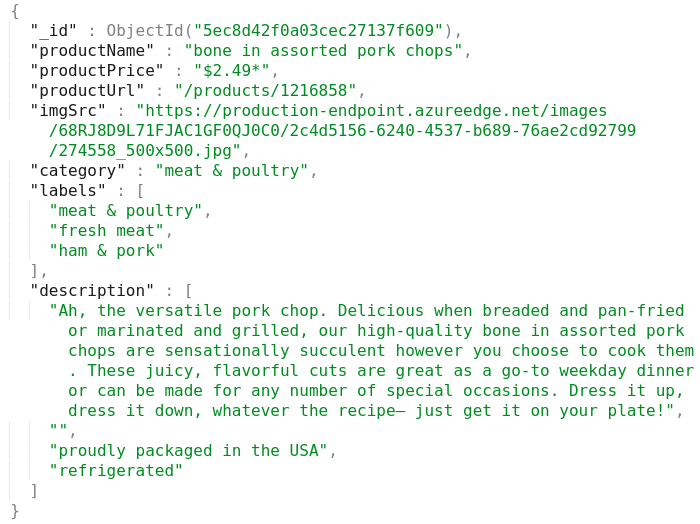

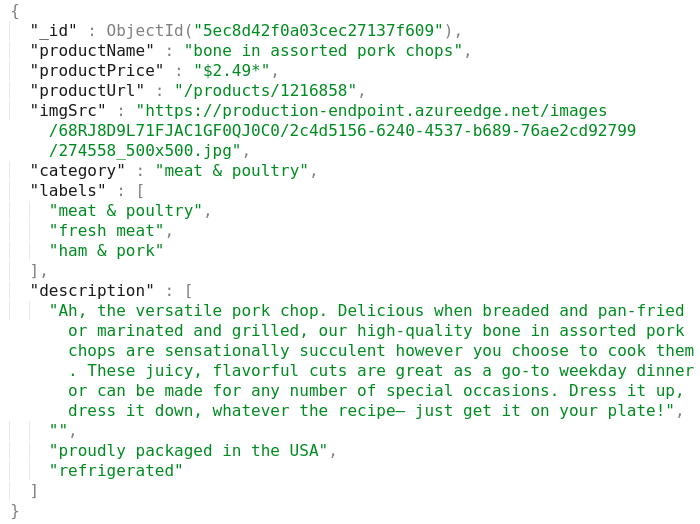

Product Data Structure

- Our proposed schema for a product:

  - Product name

  - Product price

  - Image

  - Category

  - Labels

  - Description

Review Data Structure

- Our proposed schema for a review:

  - ID of the reviewed recipe

  - Rating

  - Comment text

  - Information about the submitter

    - Followers

    - Number of recipes made

    - Geo-location metadata

Data-Driven Approach - Datasets

- We have collected and aggregated datasets containing user reviews (and scores) for recipes.

- Externally collected dataset from Kaggle:

  - 180,000 recipes

  - 700,000 reviews

  - 30,000 users

  - 99.983% sparsity

- Internally collected dataset within the project scope (AllRecipes):

  - 66,200 recipes

  - 4,138,000 reviews

  - 1,340,000 users

  - 99.9954% sparsity

- Both datasets share a similar underlying structure, and we use the same information from each: which user gave what score to which recipe, together with the text of the recipe title and of the cooking steps.
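Sparsity here is the fraction of the user-by-recipe rating matrix that is empty. The short sketch below computes it from the rounded counts listed above; because those counts are rounded, the results differ slightly from the quoted percentages, which were presumably computed from the exact counts. The function name is an assumption.

```python
def sparsity(n_users, n_recipes, n_reviews):
    """Fraction of (user, recipe) pairs that have no review."""
    return 1.0 - n_reviews / (n_users * n_recipes)


# Rounded counts taken from the dataset descriptions.
kaggle = sparsity(n_users=30_000, n_recipes=180_000, n_reviews=700_000)
internal = sparsity(n_users=1_340_000, n_recipes=66_200, n_reviews=4_138_000)

print(f"Kaggle dataset:     {kaggle:.4%}")
print(f"AllRecipes dataset: {internal:.4%}")
```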

Data-Driven Approach - Extra Features

- We have generated novel features using additional artificial neural models.

- Created specialised culinary corpora from recipe titles, descriptions or step-by-step instructions.

- Compressed the natural text vocabulary using Byte Pair Encoding into a dictionary of about 31,000 specialised tokens.

- Used BERT-based neural models to create numeric representations for natural text.

- Aggregated text-based descriptors for recipes in order to generate domain-specific representations.
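Byte Pair Encoding builds its vocabulary by repeatedly merging the most frequent pair of adjacent symbols. The sketch below learns a few merges on a toy word list; it is a textbook version of the algorithm with a hypothetical tie-breaking rule, not the tokenizer actually trained in the project.

```python
from collections import Counter


def get_pair_counts(vocab):
    """Count adjacent symbol pairs, weighted by word frequency."""
    pairs = Counter()
    for symbols, freq in vocab.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return pairs


def merge_pair(pair, vocab):
    """Replace every occurrence of `pair` with one merged symbol."""
    merged = {}
    for symbols, freq in vocab.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged


def learn_bpe(words, num_merges):
    """Learn up to `num_merges` merges from a list of words."""
    # Each word starts as characters plus an end-of-word marker.
    vocab = dict(Counter(tuple(w) + ("</w>",) for w in words))
    merges = []
    for _ in range(num_merges):
        pairs = get_pair_counts(vocab)
        if not pairs:
            break
        # Most frequent pair; ties broken by the pair itself (assumption).
        best = max(pairs, key=lambda p: (pairs[p], p))
        merges.append(best)
        vocab = merge_pair(best, vocab)
    return merges, vocab


merges, vocab = learn_bpe(["bake", "baked", "baking", "cake"], num_merges=3)
print(merges)
```

Running more merges on a large culinary corpus would grow the dictionary towards the target size, with frequent cooking terms ending up as single tokens.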

Data-Driven Recommender Models

- Employed Collaborative-Filtering inspired algorithms.

- Cross-comparison between models using Mean Squared Error (MSE) over all test reviews:

  - Baseline with a non-neural model, Non-Negative Matrix Factorization: 1.8 MSE score.

  - Encoder-Decoder neural model: 0.75 MSE score.

  - Neural Matrix Factorization using specialised BERT product representations: 0.7 MSE score.

- We used a dual-head model to predict two different tasks.

- We employed an auxiliary task (Bayesian Personalised Ranking) in order to prevent overfitting on the internally collected dataset, as its sparsity is one order of magnitude higher:

  - We adapted the Neural Matrix Factorization architecture to use 2 prediction heads, one for the recipe score and one for the probability of viewing a recipe: 0.3 MSE score.

- Multiple available recommendation strategies: score based, interaction probability based, mixed strategy, mixed strategy with available ingredient weighting.
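The two training signals mentioned above can be written down compactly: Mean Squared Error for the score head, and the Bayesian Personalised Ranking loss, which pushes the score of an item the user interacted with above that of an item they did not. The sketch below computes both on plain lists. How the project combines them is not stated in the source, so the weighted sum and the `bpr_weight` parameter are assumptions.

```python
import math


def mse(predicted, actual):
    """Mean squared error between predicted and true ratings."""
    return sum((p - a) ** 2 for p, a in zip(predicted, actual)) / len(actual)


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def bpr_loss(pos_scores, neg_scores):
    """Mean of -log(sigmoid(pos - neg)) over (positive, negative) pairs."""
    pairs = list(zip(pos_scores, neg_scores))
    return sum(softplus(neg - pos) for pos, neg in pairs) / len(pairs)


def combined_loss(pred_ratings, true_ratings, pos_scores, neg_scores,
                  bpr_weight=0.5):
    """Hypothetical joint objective: rating loss plus weighted ranking loss."""
    return (mse(pred_ratings, true_ratings)
            + bpr_weight * bpr_loss(pos_scores, neg_scores))


# Toy values: two rating predictions and two (viewed, not viewed) pairs.
loss = combined_loss(
    pred_ratings=[4.0, 3.0], true_ratings=[5.0, 3.0],
    pos_scores=[2.0, 0.5], neg_scores=[0.0, 1.0],
)
print(round(loss, 4))
```

The ranking term needs only interaction data, not explicit scores, which is why it can act as a regulariser when rated pairs are very sparse.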

Smart-Fridge Prototype

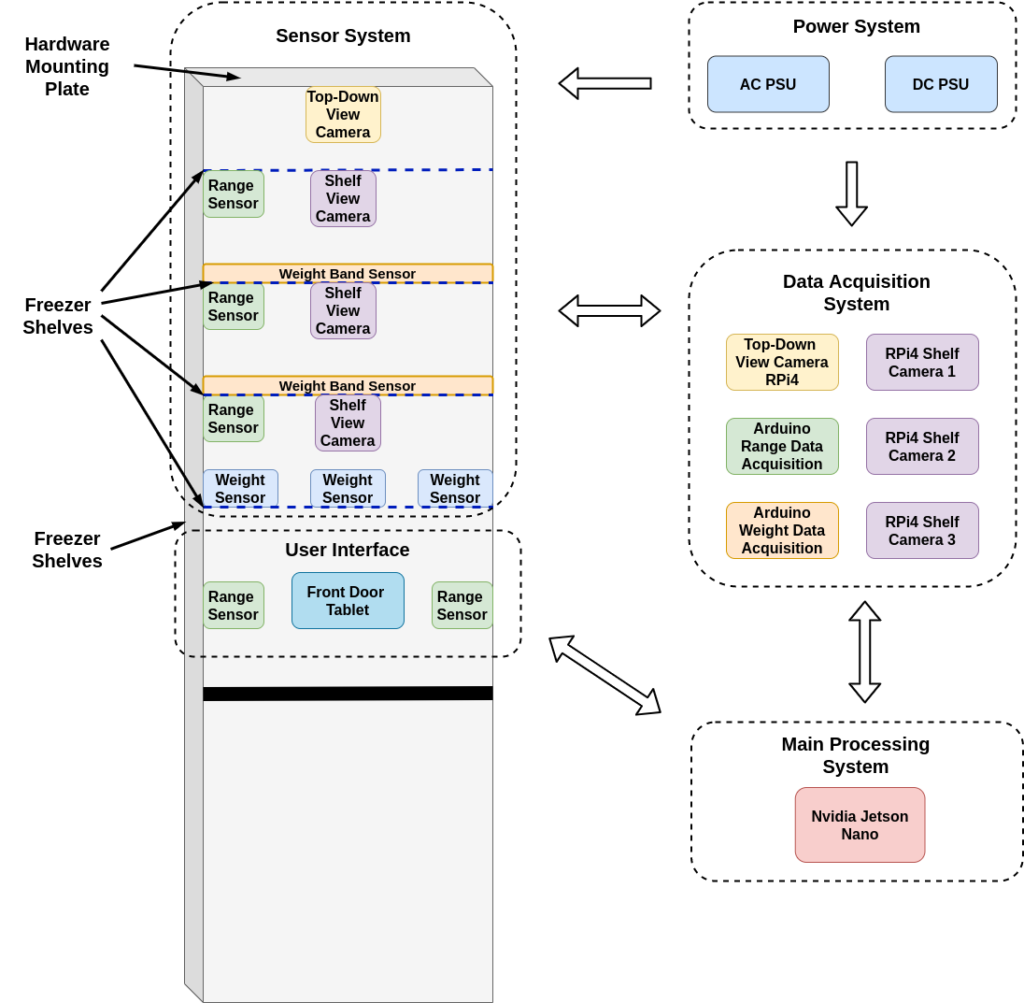

- We designed and started implementing a prototype smart-fridge that encompasses several sub-systems that work in conjunction to provide the backbone necessary to turn a normal off-the-shelf freezer into a smart appliance.

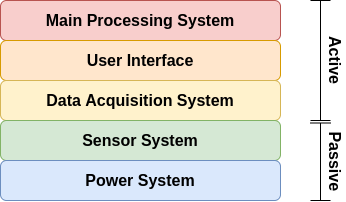

Smart-Fridge Subsystems

- Each sub-system is extensible and is responsible for a different function:

- The Power subsystem supplies the required power to each component in the system (sensors, SoCs, cameras, etc.). It is mainly composed of a 12 V, 40 A AC-to-DC supply, similar to the ones found in modern computers, and several step-down DC-to-DC converters that provide the voltage level required by each component. This subsystem is extensible in that it can seamlessly integrate power monitoring capabilities, based on which brownout or power-loss conditions can be detected and signaled to the end user.

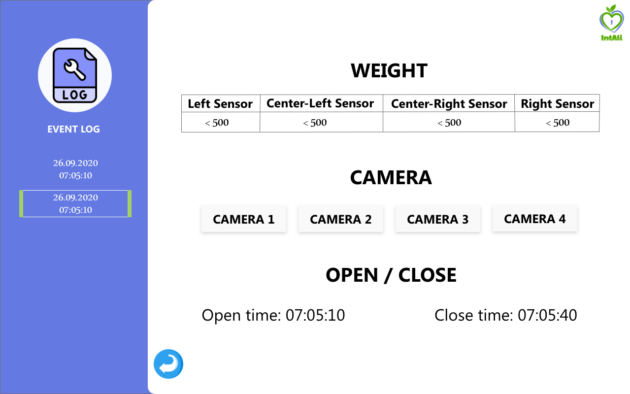

- The Sensor subsystem integrates several different sensors, such as weight sensors, distance sensors and cameras, which work in conjunction to provide the necessary information. This subsystem is also seamlessly extensible in that it does not impose any constraints on integrating additional sensors. Hence, temperature and light sensors or backlight-control relays can be added to this hardware build.

- The Data Acquisition subsystem is the primary interface with the sensors. It is split according to the different acquisition requirements in order to maintain a stable and high measurement throughput: distance and weight information is acquired through separate Arduinos, whereas the much larger video data is acquired by a separate RPi4 SoC for each camera in the system.

- The Main-Processing subsystem is currently implemented by an NVIDIA Jetson Nano SoC and is the main computing element of the current hardware build. It is interfaced with all other subsystems. This SoC was chosen due to the availability of a GPU, required for running deep models, its small size and its low power consumption. However, it is easy to replace it with more powerful hardware if needed, or even to offload computation to a server or to cloud services.

- The User Interface hardware is composed of a common tablet device and two range sensors. From the hardware standpoint, it provides the backbone necessary for user-freezer interaction, namely detecting the presence of a user and providing statuses, alerts or recommendations. As such, at the hardware level the tablet is directly interfaced with the Main-Processing subsystem.
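To illustrate how the main processing unit might consume the low-rate sensor streams, the sketch below parses text lines of the form `sensor:value` and keeps the latest reading per sensor. The message format, the sensor names and all function names are hypothetical; the source does not describe the actual protocol between the Arduinos and the Jetson Nano.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    sensor: str   # e.g. "weight" or "distance" (hypothetical names)
    value: float


def parse_line(line):
    """Parse one 'sensor:value' line; return None if it is malformed."""
    sensor, sep, raw = line.strip().partition(":")
    if not sep or not sensor:
        return None
    try:
        return Reading(sensor, float(raw))
    except ValueError:
        return None


def latest_readings(lines):
    """Keep only the most recent value reported by each sensor."""
    state = {}
    for line in lines:
        reading = parse_line(line)
        if reading is not None:  # silently skip corrupted lines
            state[reading.sensor] = reading.value
    return state


# Simulated stream, including one corrupted line.
stream = ["weight:1500", "garbage", "distance:34.5", "weight:1480"]
state = latest_readings(stream)
print(state)
```

Skipping malformed lines instead of raising keeps one noisy sensor from stalling the rest of the acquisition loop.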

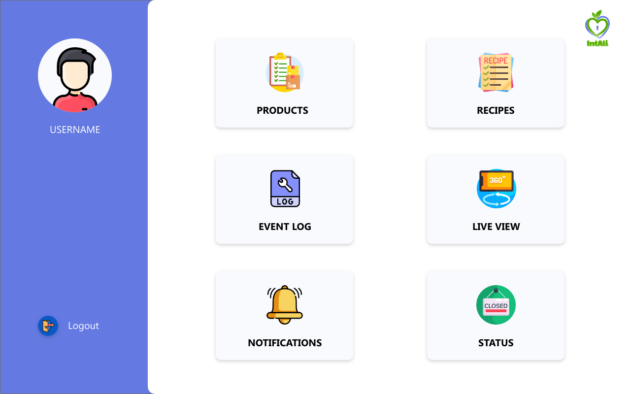

Smart-Fridge UI

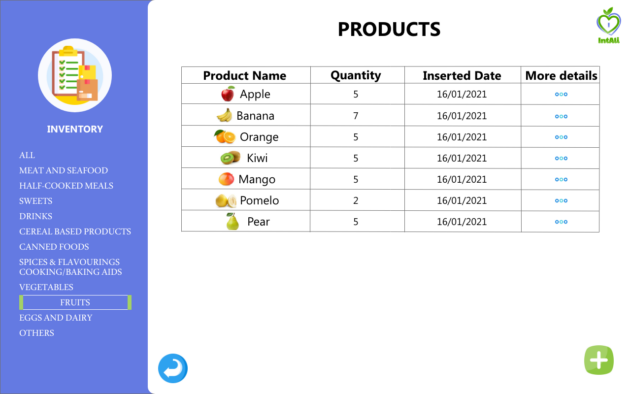

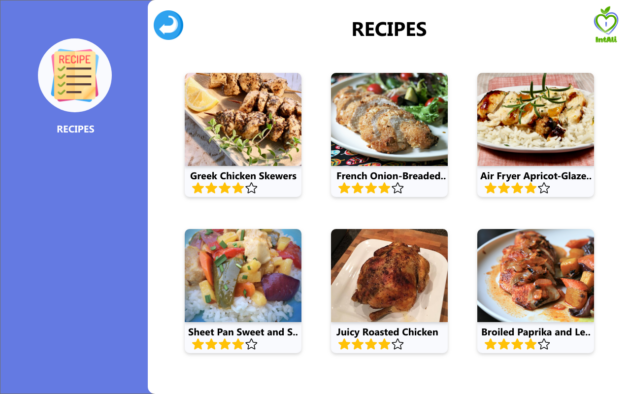

- The UI is an Android app running on the tablet.

- It allows the user to:

  - View the product inventory.

  - Get recipe recommendations.

  - Give feedback on recipes.

  - Visualize the activity log.

  - See the inside of the fridge (live video view).

  - Receive notifications and alerts.

Results

Semantic Segmentation - Quantitative Results

Semantic Segmentation - Qualitative Results

Scientific Publications

Coming soon

Team and Location

- The project has been funded through national grants for R&D (IntAli 20175/30.10.2019, part of NETIO P_40_270 53/05.09.2016) as a collaboration between University “Politehnica” of Bucharest (UPB) and Infosys.

- The R&D team is part of the AI-MAS Laboratory (Artificial Intelligence and Multi-Agent Systems) of the Faculty of Automatic Control and Computers, UPB.

- The project was implemented in the PRECIS research building on the UPB campus, where the smart-fridge is still located.

IntAli (20175/30.10.2019) | All rights are reserved, 2019-2021.